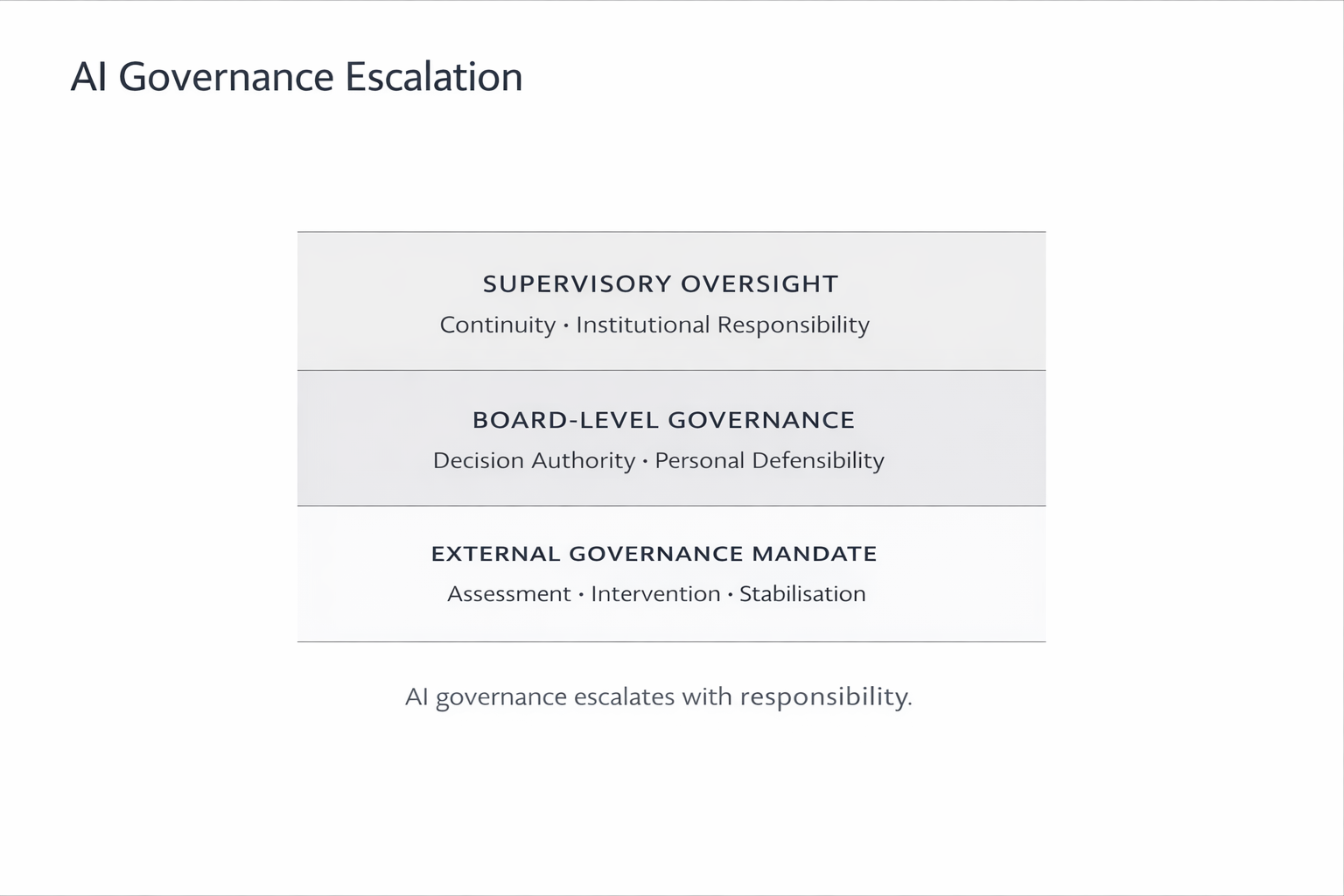

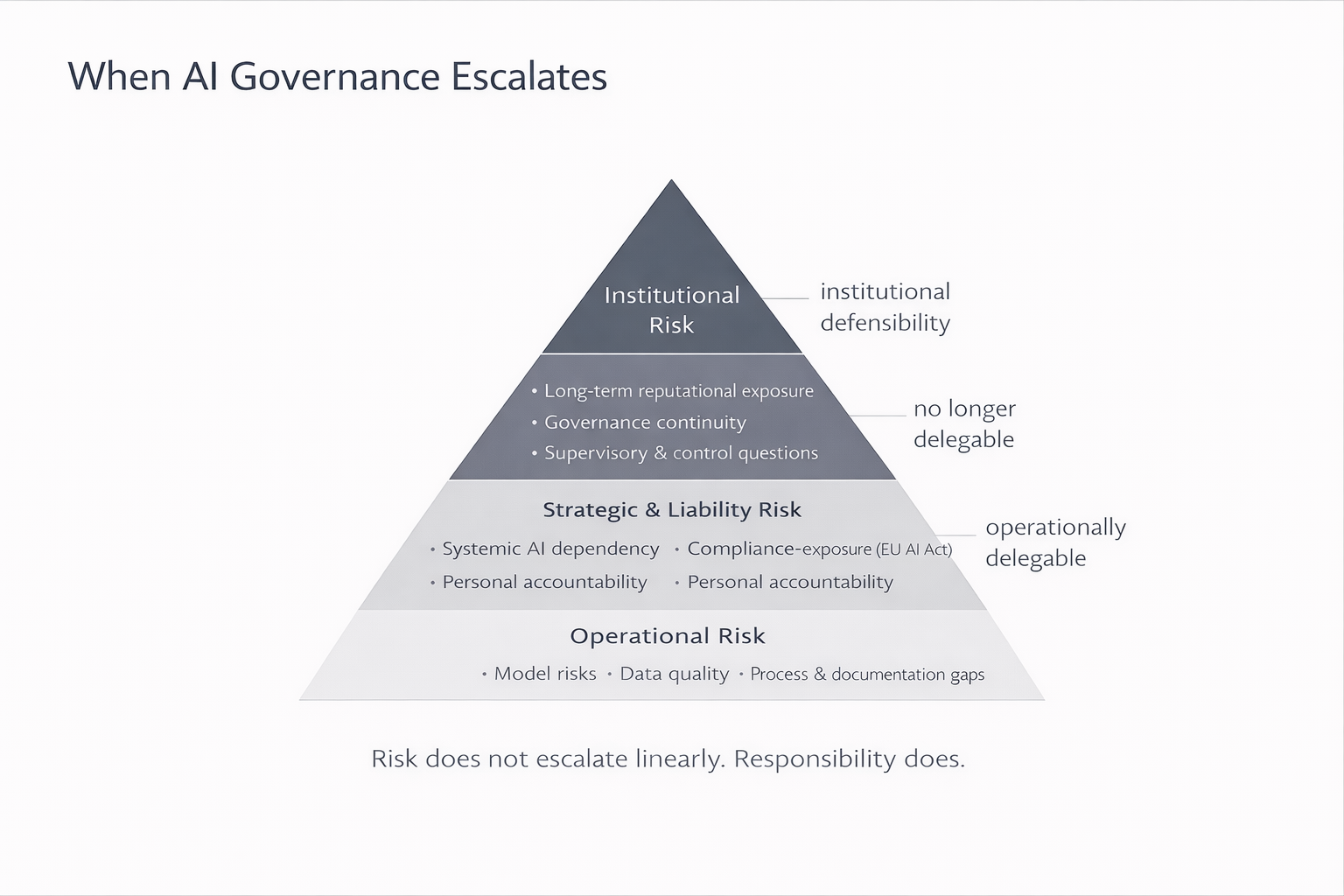

Situations where AI governance becomes non-delegable — and accountability becomes personal

Boards do not engage Patrick Upmann for strategy development, framework alignment, or advisory workshops. They engage him when AI governance becomes time-critical, personally exposed, and legally sensitive — and must hold under audit, regulatory scrutiny, incidents, or liability pressure. These are the situations in which a board-level mandate becomes necessary.

Audit or regulatory pressure

Situation

An audit is announced, expanded, or escalated. Regulators request clarification, evidence, or named accountability for AI-related decisions.

What fails most often

- decision authority exists only implicitly

- accountability is diffused across functions

- documentation is not admissible under scrutiny

- governance relies on informal coordination

Typical consequence

Boards realise too late that governance would not survive formal review.

Mandate initiated

→ Board AI Governance Stress Test

AI governance is not a service model.

It is a responsibility model.

Incident anticipation or escalation

Situation

AI-related incidents have occurred, are under internal investigation, or are expected to escalate.

What fails most often

- decisions were made but are not defensible

- escalation paths depend on individuals

- governance needs to be reconstructed after the fact

Typical consequence

Accountability becomes personal while evidence is incomplete.

Mandate initiated

→ Pre-Incident Accountability & Evidence Review

Risk does not escalate linearly.

Responsibility does.

Personal liability exposure

Situation

Board members, executives, or accountable officers face potential personal exposure related to AI systems, decisions, or oversight obligations.

What fails most often

- boundaries of decision authority are unclear

- accountability is shared but not accepted

- evidence does not meet legal or regulatory standards

Typical consequence

Personal liability risk emerges while no defensible governance structure is in place.

Mandate initiated

→ Pre-Incident Accountability & Evidence Review

Governance deadlock at executive or board level

Situation

AI governance decisions are blocked, politicised, or continuously postponed across Legal, IT, Compliance, Risk, and Business functions.

What fails most often

- no accepted decision authority exists

- committees lack a mandate or escalation power

- governance exists but cannot be enforced

Typical consequence

Time pressure increases while accountability remains unresolved.

Mandate initiated

→ Interim AI Governance Decision Lead

What boards typically ask at this point

At this stage, boards and executives usually ask questions such as:

- Who is actually accountable — by name?

- Which AI governance decisions are defensible today?

- What would fail under audit, regulatory inquiry, or court review?

- What must exist before regulators or auditors ask for it?

This is the point where mandates begin.

Next steps

If one or more of these situations apply, a mandate can be initiated directly at board or executive level.

- View Mandate Types

- Request a Board Mandate

- About Patrick Upmann

Governing principle

If governance needs to be reconstructed after the fact, it never existed.

This principle defines when Patrick Upmann’s work begins —

and when AI governance becomes non-delegable.